Amazon Amazon-DEA-C01 Dumps - Pass AWS Certified Data Engineer - Associate Exam in 2026

The Amazon-DEA-C01 exam is the AWS Certified Data Engineer - Associate certification exam from Amazon Web Services. It is designed for professionals who work with data pipelines, analytics workloads, and cloud-based data solutions. This certification validates your ability to build, manage, secure, and support data workflows on AWS. It is a valuable credential for data engineers, analytics engineers, and cloud professionals who want to prove practical AWS data engineering skills.

| # | Exam Topic | Sub-Topics | Approximate Weighting (%) |
|---|---|---|---|
| 1 | Data Ingestion and Transformation | Batch and streaming ingestion, ETL and ELT workflows, data transformation logic, pipeline orchestration | 30% |
| 2 | Data Store Management | Data lake and warehouse concepts, storage selection, partitioning and optimization, lifecycle management | 25% |
| 3 | Data Operations and Support | Monitoring and troubleshooting, job reliability, logging and alerting, operational best practices | 25% |
| 4 | Data Security and Governance | Access control, encryption, data classification, governance and compliance controls | 20% |

The exam tests whether candidates can apply AWS data engineering concepts in realistic scenarios, not just remember definitions. You need a practical understanding of ingestion, storage, operations, and governance across AWS services and workflows. Strong problem-solving skills, attention to data quality, and the ability to choose the right design for each use case are important for success.

How QA4Exam.com Helps You Pass

QA4Exam.com provides Exam PDF material with actual questions and answers, plus an Online Practice Test for the Amazon Amazon-DEA-C01 exam. The content is designed to help you study with realistic exam simulation, so you can get comfortable with the format and question style before test day. The questions are updated to reflect current exam needs, and the verified answers help you review concepts with more confidence. The practice test also helps you improve time management, identify weak areas, and build the speed needed to pass on your first attempt. With both formats, you can prepare in a focused and efficient way.

Frequently Asked Questions

Who should take the AWS Certified Data Engineer - Associate exam?

This exam is for data engineers, analytics engineers, and cloud professionals who work with AWS data solutions and want to validate practical skills in data ingestion, storage, operations, and governance.

Is the Amazon-DEA-C01 exam difficult?

It can be challenging because it focuses on applied knowledge and scenario-based questions. Candidates who understand AWS data workflows and practice with exam-style questions are usually better prepared.

Can I pass with only braindumps?

Braindumps alone are not the best approach. You should combine practice questions with real understanding of the exam topics so you can handle scenario-based questions and make the right choices under pressure.

Do I need hands-on experience to pass?

Hands-on experience is very helpful because the exam tests practical AWS data engineering skills. Even if you study from dumps and a practice test, real experience makes it easier to understand the scenarios in the exam.

Are the QA4Exam.com dumps enough or do I need other resources?

The Exam PDF and Online Practice Test from QA4Exam.com are strong preparation tools, but combining them with topic review and hands-on practice gives you a better chance of passing on the first attempt.

How do the QA4Exam.com practice test and PDF help with first-attempt success?

They help you understand the question pattern, review verified answers, practice under time limits, and identify weak areas before the real exam. This makes your preparation more focused and improves confidence on exam day.

Can I retake the exam if I do not pass?

Retake policies are set by the exam provider, so you should check the latest Amazon Web Services exam rules before scheduling or rescheduling another attempt.

The questions for Amazon-DEA-C01 were last updated on Jun 3, 2026.

A company uses Amazon Athena for one-time queries against data that is in Amazon S3. The company has several use cases. The company must implement permission controls to separate query processes and access to query history among users, teams, and applications that are in the same AWS account.

Which solution will meet these requirements?

Athena workgroups are a way to isolate query execution and query history among users, teams, and applications that share the same AWS account. By creating a workgroup for each use case, the company can control the access and actions on the workgroup resource using resource-level IAM permissions or identity-based IAM policies. The company can also use tags to organize and identify the workgroups, and use them as conditions in the IAM policies to grant or deny permissions to the workgroup. This solution meets the requirements of separating query processes and access to query history among users, teams, and applications that are in the same AWS account.

Reference:

Athena Workgroups

IAM policies for accessing workgroups

Workgroup example policies
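As a rough illustration of the explanation above, the following Python sketch builds an identity-based IAM policy document that limits a team to a single Athena workgroup. The function name `workgroup_policy`, the Region, the account ID, the workgroup name `marketing-adhoc`, and the `team` tag key are all hypothetical values chosen for the example, and the action list is only a plausible minimum, not a recommended production policy.

```python
import json


def workgroup_policy(region, account_id, workgroup, team_tag=None):
    """Build a policy document scoped to one Athena workgroup.

    All argument values are placeholders supplied by the caller.
    """
    statement = {
        "Effect": "Allow",
        "Action": [
            "athena:StartQueryExecution",
            "athena:StopQueryExecution",
            "athena:GetQueryExecution",
            "athena:GetQueryResults",
            "athena:ListQueryExecutions",
            "athena:GetWorkGroup",
        ],
        # Resource-level permission: only this workgroup is covered.
        "Resource": f"arn:aws:athena:{region}:{account_id}:workgroup/{workgroup}",
    }
    if team_tag is not None:
        # Optional tag condition; the tag key "team" is an assumption.
        statement["Condition"] = {
            "StringEquals": {"aws:ResourceTag/team": team_tag}
        }
    return {"Version": "2012-10-17", "Statement": [statement]}


# Hypothetical values for illustration only.
policy = workgroup_policy(
    "us-east-1", "123456789012", "marketing-adhoc", team_tag="marketing"
)
print(json.dumps(policy, indent=2))
```

Because query history is tracked per workgroup, a user who holds only this policy can run and list queries in `marketing-adhoc` but not in workgroups created for other use cases.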

A data engineer is processing a large amount of log data from web servers. The data is stored in an Amazon S3 bucket. The data engineer uses AWS services to process the data every day. The data engineer needs to extract specific fields from the raw log data and load the data into a data warehouse for analysis.

A data engineer is using an Apache Iceberg framework to build a data lake that contains 100 TB of data. The data engineer wants to run AWS Glue Apache Spark jobs that use the Iceberg framework.

What combination of steps will meet these requirements? (Select TWO.)

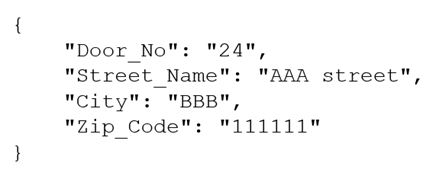

A company receives .csv files that contain physical address data. The data is in columns that have the following names: Door_No, Street_Name, City, and Zip_Code. The company wants to create a single column to store these values in the following format:

Which solution will meet this requirement with the LEAST coding effort?

The NEST TO MAP transformation allows you to combine multiple columns into a single column that contains a JSON object with key-value pairs. This is the easiest way to achieve the desired format for the physical address data, as you can simply select the columns to nest and specify the keys for each column. The NEST TO ARRAY transformation creates a single column that contains an array of values, which is not the same as the JSON object format. The PIVOT transformation reshapes the data by creating new columns from unique values in a selected column, which is not applicable for this use case. Writing a Lambda function in Python requires more coding effort than using AWS Glue DataBrew, which provides a visual and interactive interface for data transformations.

Reference:

7 most common data preparation transformations in AWS Glue DataBrew (Section: Nesting and unnesting columns)

NEST TO MAP - AWS Glue DataBrew (Section: Syntax)
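To make the difference between the two nesting results concrete, here is a small plain-Python sketch. It is not DataBrew code and does not call any AWS API; it only imitates the shape of the output described above. The helper names `nest_to_map` and `nest_to_array`, the target column name `Address`, and the sample address values are assumptions made for the example.

```python
import json


def nest_to_map(row, source_columns, target_column):
    """Return a copy of row with source columns nested as a JSON object."""
    nested = {name: row[name] for name in source_columns}
    out = dict(row)
    out[target_column] = json.dumps(nested)
    return out


def nest_to_array(row, source_columns, target_column):
    """Return a copy of row with source columns nested as a JSON array."""
    out = dict(row)
    out[target_column] = json.dumps([row[name] for name in source_columns])
    return out


# Hypothetical sample record using the column names from the question.
columns = ["Door_No", "Street_Name", "City", "Zip_Code"]
row = {
    "Door_No": "24",
    "Street_Name": "AAA street",
    "City": "BBB",
    "Zip_Code": "111111",
}

as_map = nest_to_map(row, columns, "Address")
as_array = nest_to_array(row, columns, "Address")

print(as_map["Address"])    # keys are kept alongside the values
print(as_array["Address"])  # values only, column names are lost
```

The map form keeps each column name as a key, which is why it matches a key-value address format, while the array form keeps only the values in order.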

A data engineer is optimizing query performance in Amazon Athena notebooks that use Apache Spark to analyze large datasets that are stored in Amazon S3. The data is partitioned. An AWS Glue crawler updates the partitions.

The data engineer wants to minimize the amount of data that is scanned to improve efficiency of Athena queries.

Which solution will meet these requirements?

Unlock All Questions for Amazon Amazon-DEA-C01 Exam

Full Exam Access, Actual Exam Questions, Validated Answers, Anytime Anywhere, No Download Limits, No Practice Limits

Get All 294 Questions & Answers