Databricks Databricks-Machine-Learning-Associate Dumps to Pass the Databricks Certified Machine Learning Associate Exam in 2026

The Databricks Databricks-Machine-Learning-Associate exam is part of the Machine Learning Associate certification track and is designed for candidates who want to validate practical machine learning skills on the Databricks platform. It is a strong fit for learners, data professionals, and aspiring ML practitioners who want to prove they understand the core workflows used in modern machine learning projects. Earning this certification can help demonstrate job-ready knowledge in building, managing, and deploying ML solutions with Databricks. It is also a useful credential for anyone looking to strengthen their credibility in applied machine learning.

Exam Topics Overview

| # | Exam Topic | Sub-Topics | Approximate Weighting |
|---|---|---|---|
| 1 | Databricks Machine Learning | Platform concepts, workspace components, ML tools, core terminology | 25% |
| 2 | ML Workflows | Data preparation, experiment tracking, workflow steps, reproducibility | 25% |
| 3 | Model Development | Feature engineering, training, evaluation, tuning basics | 25% |
| 4 | Model Deployment | Packaging models, deployment concepts, serving considerations, lifecycle management | 25% |

The Databricks-Machine-Learning-Associate exam tests whether candidates can apply practical ML knowledge across the Databricks environment. It focuses on understanding workflows, model development, and deployment concepts rather than only memorizing terms. Candidates should be ready to demonstrate both conceptual knowledge and hands-on familiarity with the platform. Strong preparation helps you answer scenario-based questions with confidence.

How QA4Exam.com Helps You Pass

QA4Exam.com offers Exam PDF materials with actual questions and answers plus an Online Practice Test for the Databricks Databricks-Machine-Learning-Associate exam. These resources help you study with up-to-date questions, verified answers, and a format that mirrors the real exam experience. The practice test is especially useful for building time management skills and getting comfortable with the pressure of answering questions under exam conditions. By reviewing the PDF and practicing online, you can identify weak areas faster and prepare more efficiently. This combination gives you a practical path to passing the exam on your first attempt.

Frequently Asked Questions

It is the Databricks-Machine-Learning-Associate certification exam for candidates who want to validate machine learning knowledge and practical skills related to Databricks Machine Learning, workflows, model development, and deployment.

It is suitable for candidates who have a basic understanding of machine learning and want to prove their ability to work with Databricks concepts and workflows. Some hands-on familiarity is helpful.

Braindumps alone are not the best approach. You should use them as a study aid along with understanding the topics, reviewing explanations, and practicing the workflow and deployment concepts covered in the exam.

Hands-on experience is very helpful because the exam focuses on practical knowledge of Databricks Machine Learning, ML workflows, model development, and model deployment.

QA4Exam.com provides actual questions and answers in PDF form and an Online Practice Test that helps you simulate the exam, improve timing, and review verified answers before test day.

They are highly useful for focused preparation, but the best results come from combining them with topic review and practical understanding of the exam areas.

The exam PDF includes actual questions and answers, while the Online Practice Test provides a realistic way to practice, check your knowledge, and prepare for the Databricks-Machine-Learning-Associate exam format.

The questions for Databricks-Machine-Learning-Associate were last updated on Jun 3, 2026.


Which of the following tools can be used to distribute large-scale feature engineering without the use of a UDF or pandas Function API for machine learning pipelines?

Spark ML, the DataFrame-based machine learning library in Apache Spark, is built for large-scale data processing and machine learning directly on a Spark cluster. Its feature transformers run as distributed operations on Spark DataFrames, so large-scale feature engineering does not require user-defined functions (UDFs) or the pandas Function API. Keras, pandas, PyTorch, and scikit-learn, by contrast, are single-node libraries and are not distributed across a cluster on their own.

Reference: Spark MLlib documentation (Feature Engineering with Spark ML).

A data scientist has created two linear regression models. The first model uses price as a label variable and the second model uses log(price) as a label variable. When evaluating the RMSE of each model by comparing the label predictions to the actual price values, the data scientist notices that the RMSE for the second model is much larger than the RMSE of the first model.

Which of the following possible explanations for this difference is invalid?

The invalid explanation is the claim that RMSE is not a valid evaluation metric for regression problems. Root Mean Squared Error (RMSE) is a standard and widely used metric for evaluating regression models, so it cannot account for the difference. Here is why the remaining explanations are at least plausible:

Transformations and RMSE Calculation: If a model was trained on a transformed label (e.g., log(price)), its predictions must be converted back to the original scale (here, by exponentiating) before computing RMSE against actual prices. Skipping this step compares values on two different scales and produces a misleading RMSE.

Accuracy of Models: Either model could genuinely be more accurate than the other. That can only be judged once both RMSE values are computed on the same scale, such as the original price scale.

Appropriateness of RMSE: RMSE is entirely valid for regression problems. It measures how closely a model's predictions match the observed values and is expressed in the same units as the dependent variable.
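The scale mismatch described above can be sketched in plain Python. The prices and predictions below are hypothetical numbers made up for illustration, and `rmse` is a small helper written for this example rather than a library function.

```python
import math


def rmse(actual, predicted):
    """Root mean squared error between two equal-length sequences."""
    squared_errors = [(a - p) ** 2 for a, p in zip(actual, predicted)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


# Hypothetical actual prices and predictions from a model trained on log(price).
actual_price = [100.0, 200.0, 400.0]
predicted_log_price = [math.log(110.0), math.log(190.0), math.log(420.0)]

# Mismatched scales: log-scale predictions compared directly to prices.
rmse_mismatched = rmse(actual_price, predicted_log_price)

# Consistent scales: exponentiate predictions back to price units first.
predicted_price = [math.exp(p) for p in predicted_log_price]
rmse_price_scale = rmse(actual_price, predicted_price)

print(f"RMSE without exponentiating: {rmse_mismatched:.2f}")
print(f"RMSE on the price scale:     {rmse_price_scale:.2f}")
```

With these made-up numbers the mismatched comparison yields a far larger RMSE than the price-scale comparison, even though the underlying predictions are identical.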

Reference

'Applied Predictive Modeling' by Max Kuhn and Kjell Johnson (Springer, 2013), particularly the chapters discussing model evaluation metrics.

A data scientist has developed a machine learning pipeline with a static input data set using Spark ML, but the pipeline is taking too long to process. They increase the number of workers in the cluster to get the pipeline to run more efficiently. They notice that the number of rows in the training set after reconfiguring the cluster is different from the number of rows in the training set prior to reconfiguring the cluster.

Which of the following approaches will guarantee a reproducible training and test set for each model?

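Writing the split data sets to persistent storage is the reliable way to guarantee reproducible training and test sets. A random split in Spark depends on how the data is partitioned, and partitioning can change when the cluster is reconfigured, so even a fixed seed does not guarantee identical splits. Persisting the splits lets you load exactly the same training and test data for every model run, regardless of cluster changes.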

Correct approach:

1. Split the data.
2. Write the split data to persistent storage (e.g., HDFS, S3).
3. Load the data from storage for each model training session.

    # Split once, then persist both splits
    train_df, test_df = spark_df.randomSplit([0.8, 0.2], seed=42)
    train_df.write.parquet('path/to/train_df.parquet')
    test_df.write.parquet('path/to/test_df.parquet')

    # Later, load the data
    train_df = spark.read.parquet('path/to/train_df.parquet')
    test_df = spark.read.parquet('path/to/test_df.parquet')

Which of the following describes the relationship between native Spark DataFrames and pandas API on Spark DataFrames?

A pandas API on Spark DataFrame is made up of a Spark DataFrame plus additional metadata. The pandas API on Spark aims to provide a pandas-like experience with the scalability and distributed execution of Spark, so users can apply pandas-style functions to large datasets while Spark does the underlying work.

Reference: Databricks documentation on pandas API on Spark.

A data scientist is utilizing MLflow Autologging to automatically track their machine learning experiments. After completing a series of runs for the experiment experiment_id, the data scientist wants to identify the run_id of the run with the best root-mean-square error (RMSE).

Which of the following lines of code can be used to identify the run_id of the run with the best RMSE in experiment_id?

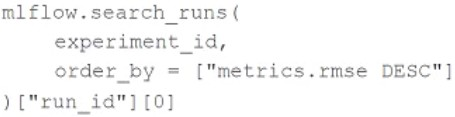

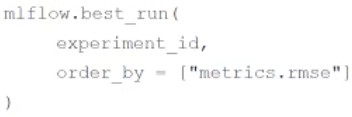

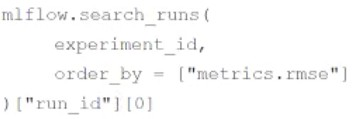

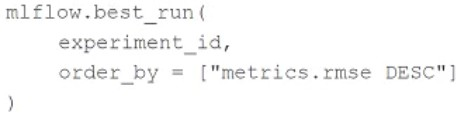

A)

B)

C)

D)

To find the run_id of the run with the best root-mean-square error (RMSE) in an MLflow experiment, the correct line of code to use is:

    mlflow.search_runs(experiment_id, order_by=['metrics.rmse'])['run_id'][0]

This line of code searches the runs in the specified experiment, orders them by the RMSE metric in ascending order (the lower the RMSE, the better), and retrieves the run_id of the best-performing run. Option C correctly represents this logic.

Reference

MLflow documentation on tracking experiments: https://www.mlflow.org/docs/latest/python_api/mlflow.html#mlflow.search_runs
