Linux Foundation CKAD Dumps - Pass Certified Kubernetes Application Developer Exam in 2026

The Linux Foundation CKAD exam, Certified Kubernetes Application Developer, is designed for developers who build, deploy, and maintain applications on Kubernetes. It validates practical skills needed to work with application workloads in real-world cluster environments. This certification belongs to the Kubernetes Application Developer track and is highly relevant for professionals who want to prove hands-on Kubernetes application expertise. Earning CKAD can strengthen your credibility and show employers that you can handle modern cloud-native application tasks.

Exam Topics and Approximate Weightage

| # | Exam Topics | Sub-Topics | Approximate Weightage (%) |
|---|---|---|---|
| 1 | Application Design and Build | Container images, multi-container design, application manifests | 20 |
| 2 | Application Deployment | Deployments, rolling updates, scaling and rollout control | 20 |
| 3 | Application Environment, Configuration and Security | ConfigMaps, Secrets, environment variables, security context | 25 |
| 4 | Services and Networking | Services, DNS access, network exposure, port mapping | 20 |
| 5 | Application Observability and Maintenance | Logs, probes, debugging, resource monitoring | 15 |

The CKAD exam tests your ability to solve Kubernetes application tasks quickly and accurately in a live environment. It focuses on practical skills, configuration knowledge, and the ability to work under time pressure. Candidates must understand how to build, deploy, expose, troubleshoot, and secure applications using Kubernetes resources. Success depends on hands-on proficiency rather than theory alone.

How QA4Exam.com Helps You Pass

QA4Exam.com offers CKAD Exam PDF materials with actual questions and answers, plus an Online Practice Test that helps you prepare with confidence. The PDF gives you a focused way to study verified content, while the practice test simulates the real exam environment so you can build speed and accuracy. Up-to-date questions help you stay aligned with the current exam style and objectives. You can also practice time management, identify weak areas, and improve your readiness before test day. With consistent preparation, these resources can help you aim for a first-attempt pass on the Linux Foundation CKAD exam.

Frequently Asked Questions

1. Who should take the Linux Foundation CKAD exam?

The CKAD exam is for developers and technical professionals who want to prove their ability to build and manage applications on Kubernetes. It is especially relevant for candidates aiming for the Kubernetes Application Developer certification.

2. Is the CKAD exam difficult?

Yes, it can be challenging because it is practical and time-based. You need strong hands-on skills with Kubernetes application tasks, not just general knowledge.

3. Can I pass CKAD with only braindumps?

Braindumps alone are not a complete preparation strategy. You should also practice hands-on Kubernetes tasks, review concepts, and use a practice test to improve readiness and confidence.

4. Do I need hands-on experience before taking CKAD?

Yes, hands-on experience is important because the exam tests practical ability. Working with deployments, services, configuration, security, and troubleshooting will help you perform better.

5. Are QA4Exam.com dumps and practice tests enough to prepare?

They are a strong part of preparation because they provide actual questions and answers, verified content, and a realistic test format. For best results, combine them with practical lab work and review of the CKAD topic areas.

6. How do the QA4Exam.com materials help me pass on the first attempt?

The Exam PDF and Online Practice Test help you study efficiently, understand question patterns, and practice under exam-like conditions. This improves speed, accuracy, and time management for first-attempt success.

7. What format do the QA4Exam.com CKAD materials come in?

The materials are offered as an Exam PDF with questions and answers and as an Online Practice Test. Together, they provide both study convenience and interactive exam simulation.

The questions for CKAD were last updated on Jun 2, 2026.

- Viewing questions 1-5 out of 48 questions

SIMULATION

Task:

Create a Deployment named expose in the existing ckad00014 namespace running 6 replicas of a Pod. Specify a single container using the lfccncf/nginx:1.13.7 image.

Add an environment variable named NGINX_PORT with the value 8001 to the container, then expose port 8001.

Solution:
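
One way to approach this task, as a sketch rather than a verified answer: write a Deployment manifest and apply it. The `app: expose` label, the container name `nginx`, and the local file name are illustrative assumptions, and the image reference is written as `lfccncf/nginx:1.13.7` on the assumption that this is what the task text intends. The `kubectl` commands are left as comments because they need the exam cluster.

```shell
#!/bin/sh
# Sketch only: write a Deployment manifest for the "expose" task to a local file.
# Assumptions (not from the task): the app=expose label, the container name,
# the file name, and the exact spelling of the image reference.
MANIFEST="${MANIFEST:-expose-deployment.yaml}"
IMAGE="${IMAGE:-lfccncf/nginx:1.13.7}"

cat > "$MANIFEST" <<EOF
apiVersion: apps/v1
kind: Deployment
metadata:
  name: expose
  namespace: ckad00014
spec:
  replicas: 6
  selector:
    matchLabels:
      app: expose
  template:
    metadata:
      labels:
        app: expose
    spec:
      containers:
      - name: nginx
        image: ${IMAGE}
        env:
        - name: NGINX_PORT
          value: "8001"
        ports:
        - containerPort: 8001
EOF

echo "wrote $MANIFEST"
# On the exam host, apply and check the rollout:
#   kubectl apply -f "$MANIFEST"
#   kubectl -n ckad00014 rollout status deploy expose
```

The environment variable value is quoted because Kubernetes requires `env` values to be strings, and an unquoted 8001 would be parsed as a number.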

SIMULATION

Context

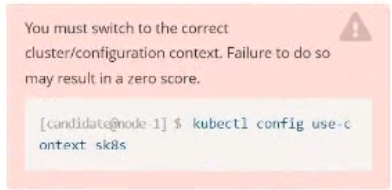

You must connect to the correct host. Failure to do so may result in a zero score.

[candidate@base] $ ssh ckad00028

Task

A Pod within the Deployment named honeybee-deployment and in namespace gorilla is logging errors.

Look at the logs to identify error messages.

Find errors, including User "system:serviceaccount:gorilla:default" cannot list resource "pods" [ ... ] in the namespace "gorilla".

Update the Deployment honeybee-deployment to resolve the errors in the logs of the Pod.

The honeybee-deployment's manifest file can be found at /home/candidate/prompt-escargot/honey bee-deployment.yaml

ssh ckad00028

You're seeing RBAC errors like:

User 'system:serviceaccount:gorilla:default' cannot list resource 'pods' ... in namespace 'gorilla'

That means the Pod is running as the default ServiceAccount and needs permission to list pods (and possibly also get/watch).

You must fix it by updating the Deployment (via its manifest file) and giving it the proper RBAC.

1) Confirm the error in logs

kubectl -n gorilla get deploy honeybee-deployment

kubectl -n gorilla logs deploy/honeybee-deployment --tail=200

If it's CrashLooping and you need previous logs:

POD=$(kubectl -n gorilla get pods -l app=honeybee -o jsonpath='{.items[0].metadata.name}' 2>/dev/null || kubectl -n gorilla get pods -o jsonpath='{.items[0].metadata.name}')

kubectl -n gorilla logs "$POD" --previous --tail=200

You should see the "cannot list resource pods" line.

2) Create a dedicated ServiceAccount for the app

(A dedicated ServiceAccount is standard practice: it grants the permission to this app only, rather than to every Pod in the namespace that runs as the default ServiceAccount.)

kubectl -n gorilla create serviceaccount honeybee-sa

kubectl -n gorilla get sa honeybee-sa

3) Create RBAC: Role + RoleBinding (namespaced)

This will allow listing pods in namespace gorilla.

cat <<'EOF' > honeybee-rbac.yaml
apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  name: honeybee-pod-reader
  namespace: gorilla
rules:
- apiGroups: ['']
  resources: ['pods']
  verbs: ['get', 'list', 'watch']
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: honeybee-pod-reader-binding
  namespace: gorilla
subjects:
- kind: ServiceAccount
  name: honeybee-sa
  namespace: gorilla
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: honeybee-pod-reader
EOF

Apply it:

kubectl apply -f honeybee-rbac.yaml

Quick verification (optional but very useful):

kubectl auth can-i list pods -n gorilla --as=system:serviceaccount:gorilla:honeybee-sa

Should return yes.

4) Update the Deployment manifest to use the new ServiceAccount

The manifest is at:

/home/candidate/prompt-escargot/honey bee-deployment.yaml

Because there's a space in the filename, quote it.

4.1 Edit the file

cd /home/candidate/prompt-escargot

ls -l

vi 'honey bee-deployment.yaml'

In the Deployment YAML, add (or set) serviceAccountName under spec.template.spec:

serviceAccountName: honeybee-sa

Example location:

spec:
  template:
    spec:
      serviceAccountName: honeybee-sa
      containers:
      - name: ...

Save and exit.

4.2 Apply the updated manifest

kubectl apply -f '/home/candidate/prompt-escargot/honey bee-deployment.yaml'

5) Ensure rollout succeeds and errors are gone

kubectl -n gorilla rollout status deploy honeybee-deployment

kubectl -n gorilla logs deploy/honeybee-deployment --tail=200

Also confirm the pods now run with the right ServiceAccount:

kubectl -n gorilla get pods -o jsonpath='{range .items[*]}{.metadata.name}{" sa="}{.spec.serviceAccountName}{"\n"}{end}'

You should no longer see the RBAC "cannot list pods" errors.

SIMULATION

You must connect to the correct host. Failure to do so may result in a zero score.

[candidate@base] $ ssh ckad00029

Task

Modify the existing Deployment named store-deployment, running in namespace grubworm, so that its containers:

* run with user ID 10000
* have the NET_BIND_SERVICE capability added

The store-deployment's manifest file is at:

/home/candidate/daring-moccasin/store-deplovment.vaml

ssh ckad00029

You must modify the existing Deployment store-deployment in namespace grubworm so that its containers:

* run as user ID 10000
* have Linux capability NET_BIND_SERVICE added

And you're told to use the manifest file at:

/home/candidate/daring-moccasin/store-deplovment.vaml (note: the filename looks misspelled; follow it exactly on the host)

1) Inspect the current Deployment and locate the manifest file

kubectl -n grubworm get deploy store-deployment

ls -l /home/candidate/daring-moccasin/

Open the manifest:

sed -n '1,200p' '/home/candidate/daring-moccasin/store-deplovment.vaml'

2) Edit the manifest to add SecurityContext

Edit the file:

vi '/home/candidate/daring-moccasin/store-deplovment.vaml'

2.1 Set Pod-level runAsUser = 10000

Under spec.template.spec, add:

securityContext:
  runAsUser: 10000

2.2 Add NET_BIND_SERVICE capability at container-level

Under the container spec (for each container in containers:), add:

securityContext:
  capabilities:
    add: ['NET_BIND_SERVICE']

A trimmed example showing where the fields go (mind indentation; leave the rest of the existing manifest, such as replicas and selector, unchanged):

apiVersion: apps/v1
kind: Deployment
metadata:
  name: store-deployment
  namespace: grubworm
spec:
  template:
    spec:
      securityContext:
        runAsUser: 10000
      containers:
      - name: store
        image: someimage
        securityContext:
          capabilities:
            add: ['NET_BIND_SERVICE']

Important notes:

runAsUser can be set at Pod level (applies to all containers) or per-container. Pod-level is cleanest if all containers should run as 10000.

Capabilities must be set per-container (that's where Kubernetes supports it).

Save and exit.

3) Apply the updated manifest

kubectl apply -f '/home/candidate/daring-moccasin/store-deplovment.vaml'

4) Ensure the Deployment rolls out

kubectl -n grubworm rollout status deploy store-deployment

5) Verify the settings are in effect

Check the rendered pod template:

kubectl -n grubworm get deploy store-deployment -o jsonpath='{.spec.template.spec.securityContext}{"\n"}'

kubectl -n grubworm get deploy store-deployment -o jsonpath='{.spec.template.spec.containers[0].securityContext}{"\n"}'

Verify on a running pod:

kubectl -n grubworm get pods

kubectl -n grubworm describe pod

If there are multiple containers

Repeat the container-level securityContext.capabilities.add block for each container under spec.template.spec.containers.
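
As a rough illustration of that repetition, the sketch below writes a pod-template fragment with two containers to a scratch file. The second container name `sidecar`, the image name `otherimage`, and the file name are hypothetical. Only `runAsUser: 10000` and the `NET_BIND_SERVICE` capability come from the task.

```shell
#!/bin/sh
# Sketch only: a pod-template fragment (not a complete Deployment) showing the
# capability block repeated for every container. "sidecar" and "otherimage"
# are hypothetical placeholders.
FRAGMENT="${FRAGMENT:-multi-container-fragment.yaml}"

cat > "$FRAGMENT" <<'EOF'
spec:
  template:
    spec:
      securityContext:
        runAsUser: 10000          # Pod level: applies to all containers
      containers:
      - name: store
        image: someimage
        securityContext:
          capabilities:
            add: ["NET_BIND_SERVICE"]
      - name: sidecar
        image: otherimage
        securityContext:
          capabilities:
            add: ["NET_BIND_SERVICE"]
EOF

# Count the lines that add the capability; one per container is expected.
grep -c 'NET_BIND_SERVICE' "$FRAGMENT"   # prints 2
```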

SIMULATION

Context

You are tasked to create a ConfigMap and consume the ConfigMap in a pod using a volume mount.

Task

Please complete the following:

* Create a ConfigMap named another-config containing the key/value pair: key4/value3

* Start a pod named nginx-configmap containing a single container using the nginx image, and mount the key you just created into the pod under directory /also/a/path

Solution:
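
One possible approach, sketched under assumptions rather than given as a verified answer: define the ConfigMap and the Pod in one manifest and mount the ConfigMap as a volume. The volume name `config-volume`, the container name `nginx`, and the local file name are illustrative choices. The `kubectl` steps are shown as comments because they need a cluster.

```shell
#!/bin/sh
# Sketch only: ConfigMap plus a Pod that mounts it as a volume.
# Assumptions (not from the task): volume name, container name, file name.
MANIFEST="${MANIFEST:-nginx-configmap.yaml}"

cat > "$MANIFEST" <<'EOF'
apiVersion: v1
kind: ConfigMap
metadata:
  name: another-config
data:
  key4: value3
---
apiVersion: v1
kind: Pod
metadata:
  name: nginx-configmap
spec:
  containers:
  - name: nginx
    image: nginx
    volumeMounts:
    - name: config-volume
      mountPath: /also/a/path
  volumes:
  - name: config-volume
    configMap:
      name: another-config
EOF

echo "wrote $MANIFEST"
# On a cluster, apply it and read the mounted key back:
#   kubectl apply -f "$MANIFEST"
#   kubectl exec nginx-configmap -- cat /also/a/path/key4
```

When a ConfigMap is mounted as a volume, each key becomes a file in the mount directory, so the value should appear as the contents of /also/a/path/key4.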

SIMULATION

Context

Developers occasionally need to submit pods that run periodically.

Task

Follow the steps below to create a pod that will start at a predetermined time and which runs to completion only once each time it is started:

* Create a YAML formatted Kubernetes manifest /opt/KDPD00301/periodic.yaml that runs the following shell command: date in a single busybox container. The command should run every minute and must complete within 22 seconds or be terminated by Kubernetes. The CronJob name and container name should both be hello

* Create the resource in the above manifest and verify that the job executes successfully at least once

Solution:
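
One possible approach, as a sketch under stated assumptions: a CronJob with a one-minute schedule, where `activeDeadlineSeconds: 22` on the Job spec enforces the time limit. The script writes to a local `periodic.yaml` so it can run anywhere. On the exam host the file would go to /opt/KDPD00301/periodic.yaml, for example by setting `MANIFEST` to that path. The choice of `restartPolicy: Never` is an assumption (`OnFailure` is the other value a Job accepts).

```shell
#!/bin/sh
# Sketch only: CronJob "hello" that runs `date` in busybox every minute and is
# terminated if a run exceeds 22 seconds.
# On the exam host: MANIFEST=/opt/KDPD00301/periodic.yaml sh this-script.sh
MANIFEST="${MANIFEST:-periodic.yaml}"

cat > "$MANIFEST" <<'EOF'
apiVersion: batch/v1
kind: CronJob
metadata:
  name: hello
spec:
  schedule: "* * * * *"
  jobTemplate:
    spec:
      activeDeadlineSeconds: 22
      template:
        spec:
          restartPolicy: Never
          containers:
          - name: hello
            image: busybox
            command: ["/bin/sh", "-c", "date"]
EOF

echo "wrote $MANIFEST"
# On a cluster, create the resource and confirm at least one run completes:
#   kubectl apply -f "$MANIFEST"
#   kubectl get cronjob hello
#   kubectl get jobs --watch
```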
