Salesforce Plat-Arch-204 Dumps - Pass Salesforce Certified Platform Integration Architect Exam in 2026

The Salesforce Plat-Arch-204 exam belongs to the Salesforce Architect certification track and focuses on the Salesforce Certified Platform Integration Architect credential. It is designed for professionals who plan, design, and manage integration solutions across systems using Salesforce. This certification matters because it validates your ability to make sound architectural decisions for business-critical integrations. It is a strong choice for candidates who want to prove practical expertise in enterprise integration design and delivery.

| # | Exam Topics | Sub-Topics | Approximate Weight |
|---|---|---|---|
| 1 | Evaluate the Current System Landscape | Existing applications and data flows; system dependencies; integration constraints | 15% |
| 2 | Evaluate Business Needs | Business goals and priorities; stakeholder expectations; process alignment | 15% |
| 3 | Translate Needs to Integration Requirements | Functional requirements; data movement needs; security and compliance considerations | 20% |
| 4 | Design Integration Solutions | Integration patterns; API strategy; event and batch approach selection | 25% |
| 5 | Build Solution | Implementation planning; configuration and development choices; testing readiness | 15% |
| 6 | Maintain Integration | Monitoring and support; troubleshooting; change management and long-term reliability | 10% |

This exam tests how well candidates can assess an enterprise environment, interpret business needs, and convert those needs into practical integration designs. It also tests depth of knowledge in solution planning, implementation considerations, and ongoing maintenance. Candidates should be ready to apply architectural thinking to realistic scenarios, not just memorize terms. Sound practical judgment and a solid understanding of integration are important for success.

How QA4Exam.com Helps You Pass

QA4Exam.com offers the Exam PDF with actual questions and answers plus an Online Practice Test for the Salesforce Plat-Arch-204 exam. These materials help you study with up-to-date questions and verified answers so you can focus on the most relevant exam content. The practice test gives you a real exam simulation, which is useful for building confidence and improving time management. With repeated practice, you can identify weak areas faster and prepare more effectively for your first attempt. This combination is designed to make your preparation more focused and practical.

Frequently Asked Questions

Who should take the Salesforce Certified Platform Integration Architect exam?

This exam is for candidates in the Salesforce Architect track who want to validate their ability to design and manage integration solutions. It is suitable for professionals working with enterprise systems, APIs, and data integration planning.

Is the Plat-Arch-204 exam difficult?

Yes, it can be challenging because it tests architectural judgment, integration design, and the ability to apply knowledge to practical scenarios. Preparation with focused exam materials can make the exam much more manageable.

Can I pass with only braindumps?

Braindumps alone are not a complete preparation strategy. You should also understand the concepts behind the questions so you can handle different exam scenarios with confidence.

Do I need hands-on experience to pass?

Hands-on experience is very helpful because the exam focuses on real-world integration decisions. Practical exposure makes it easier to understand system landscape evaluation, solution design, and maintenance topics.

Are the QA4Exam.com dumps and practice test enough for first-attempt preparation?

They are very useful for first-attempt preparation because they provide actual questions with verified answers and exam-style practice. For best results, use them to reinforce your study and improve your timing.

What format do the QA4Exam.com materials come in?

QA4Exam.com provides an Exam PDF and an Online Practice Test. The PDF helps with review and study, while the practice test helps you simulate the exam environment and manage time better.

If I fail, can I retake the exam?

Retake policies are determined by the exam provider, so you should check the official Salesforce exam rules for the latest retake information. It is best to prepare thoroughly before your first attempt to avoid needing a retake.

The questions for Plat-Arch-204 were last updated on Jun 6, 2026.

This page shows questions 1-5 of the 129 in the question bank.

Universal Containers (UC) is a global financial company. UC support agents would like to open bank accounts on the spot for customers who inquire about UC products. During the bank account opening process, the agents execute credit checks for the customers through external agencies. At any given time, up to 30 concurrent reps will be using the service to perform credit checks for customers. Which error handling mechanisms should be built to display an error to the agent when the credit verification process has failed?

In a synchronous Request-Reply integration, where a bank agent is waiting for a real-time credit check before opening an account, the error handling strategy must balance user experience with system resilience. Handling these errors at the Middleware layer is the architecturally preferred solution for managing complex retry logic and providing a clean response to Salesforce.

If the external credit agency's service is momentarily unavailable, the middleware (such as an ESB or MuleSoft) can automatically retry the request multiple times using a pre-defined strategy (e.g., exponential backoff). This 'self-healing' behavior can often resolve transient network issues before the Salesforce agent even realizes there was a problem. If the retries fail, the middleware then returns a structured error message to Salesforce, which is displayed to the agent via the UI.
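
A minimal Python sketch of the middleware-side retry described above. It assumes the credit-agency call is wrapped in a callable that raises a hypothetical `TransientError` for retryable failures (timeouts, HTTP 503); the error codes such as `CREDIT_CHECK_UNAVAILABLE` are illustrative and not part of any Salesforce or MuleSoft API. Exponential backoff with full jitter is one common retry strategy, shown here as an example rather than a prescribed one.

```python
import random
import time
from typing import Any, Callable, Dict


class TransientError(Exception):
    """Hypothetical: raised by the credit-agency client for retryable failures."""


def call_with_backoff(
    call: Callable[[], Dict[str, Any]],
    max_attempts: int = 3,
    base_delay: float = 0.5,
    max_delay: float = 4.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Dict[str, Any]:
    """Retry transient failures, then return a structured result for Salesforce.

    Only TransientError is retried; any other exception is reported at once
    as a non-retryable error so the agent is not kept waiting.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return {"status": "OK", "data": call(), "attempts": attempt}
        except TransientError as exc:
            if attempt == max_attempts:
                # Retries exhausted: return a clean error the UI can show the agent.
                return {
                    "status": "ERROR",
                    "code": "CREDIT_CHECK_UNAVAILABLE",
                    "message": f"Credit check failed after {attempt} attempts: {exc}",
                    "attempts": attempt,
                }
            # Exponential backoff with full jitter: wait 0..min(max_delay, base * 2^(n-1)).
            delay = min(max_delay, base_delay * 2 ** (attempt - 1))
            sleep(random.uniform(0, delay))
        except Exception as exc:
            # Non-transient failure (e.g. a rejected request): do not retry.
            return {
                "status": "ERROR",
                "code": "CREDIT_CHECK_REJECTED",
                "message": str(exc),
                "attempts": attempt,
            }
    raise AssertionError("unreachable: the loop always returns")
```

Because the agent is waiting on a synchronous callout, the total retry budget (attempts, delays, and each call's own timeout) should stay well inside the Salesforce callout timeout, which defaults to 10 seconds for an Apex callout and can be raised to at most 120 seconds.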

Option B (Fire and Forget) is unsuitable for this use case because the agent needs the result immediately to proceed with the bank account opening; they cannot wait for a background process that might finish hours later. Option C (Mock Service) is a testing tool and has no place in a production environment where real financial decisions are being made. By delegating error management to the middleware, UC keeps its Salesforce instance performant (avoiding long-running request timeouts) while maximizing the chances of a successful credit check through automated, controlled retries.

Salesforce users need to read data from an external system via an HTTP request. Which security methods should an integration architect leverage within Salesforce to secure the integration?

To secure outbound HTTP requests from Salesforce, architects must implement defense-in-depth measures at both the authentication and transport layers.

Named Credentials are the primary architectural recommendation for managing callout endpoints and authentication in a secure, declarative manner. They abstract the endpoint URL and authentication parameters (such as usernames, passwords, or OAuth tokens) away from Apex code. This prevents sensitive credentials from being hardcoded or exposed in metadata, significantly reducing the risk of accidental disclosure. By using Named Credentials, Salesforce handles the heavy lifting of authentication headers automatically, ensuring that the integration is both secure and maintainable.

Two-way SSL (Mutual Authentication) provides an additional layer of security at the transport layer. While standard SSL ensures that Salesforce trusts the external server, Two-way SSL requires the external server to also verify the identity of the Salesforce client. The architect first generates a certificate in Salesforce, which is then presented to the external system during the TLS handshake. This 'mutual trust' ensures that the external service only accepts requests from an authorized Salesforce instance, protecting against man-in-the-middle attacks and unauthorized access attempts.
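
On the receiving side, "mutual" means the external server rejects any TLS client that cannot present a certificate it trusts. A minimal sketch using Python's standard `ssl` module, assuming (hypothetically) that the external system is a Python service and that the certificate generated in Salesforce has been exported to a PEM file passed as `trusted_client_ca`. The function name and file paths are illustrative.

```python
import ssl
from typing import Optional


def make_mtls_server_context(
    server_cert: Optional[str] = None,
    server_key: Optional[str] = None,
    trusted_client_ca: Optional[str] = None,
) -> ssl.SSLContext:
    """Build a TLS server context that requires a client certificate.

    server_cert/server_key: the external system's own certificate and key.
    trusted_client_ca: PEM file with the certificate exported from Salesforce
    (or the CA that signed it); clients whose certificate does not chain to
    it are rejected during the handshake.
    """
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    # The setting that makes this two-way SSL: no client certificate, no connection.
    ctx.verify_mode = ssl.CERT_REQUIRED
    if server_cert and server_key:
        ctx.load_cert_chain(certfile=server_cert, keyfile=server_key)
    if trusted_client_ca:
        ctx.load_verify_locations(cafile=trusted_client_ca)
    return ctx
```

The context would then wrap the listening socket, for example with `ctx.wrap_socket(sock, server_side=True)`; a client that presents no certificate, or one that does not chain to `trusted_client_ca`, fails the handshake before any HTTP request is processed.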

While an Authentication Provider (Auth. Provider, Option C) is essential for OAuth-based flows, it is typically configured as part of a Named Credential rather than used as a standalone security method for a generic HTTP request. By combining Named Credentials with Two-way SSL, the architect secures the integration at both the session/authentication level and the network/transport level, in line with enterprise security best practices for cloud-to-on-premise and cloud-to-cloud communication.

A media company recently implemented an IAM system supporting SAML and OpenId. The IAM system must integrate with Salesforce to give new self-service customers instant access to Salesforce Community Cloud. Which requirement should Salesforce Community Cloud support for self-registration and SSO?

To provide 'instant access' for new customers via an external IAM system using SAML, Salesforce provides a declarative feature called Just-in-Time (JIT) provisioning.

When a customer attempts to log in to the Community (Experience Cloud) through the IAM system, the IAM system (acting as the Identity Provider) sends a SAML assertion to Salesforce. If JIT provisioning is enabled, Salesforce parses the user information contained in that assertion, such as name, email, and Federation ID. If a corresponding User record does not exist, Salesforce automatically creates one on the fly and then logs the user in. This eliminates the need for a manual registration step or pre-provisioned accounts.
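
Salesforce performs JIT provisioning declaratively, but the decision it makes on each login can be sketched in a few lines. A minimal Python sketch, assuming the SAML assertion has already been validated (signature, audience, expiry) and its attributes mapped into a plain dict; the attribute keys, the `User` shape, and the in-memory store are hypothetical stand-ins, not Salesforce's data model.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass
class User:
    federation_id: str
    email: str
    first_name: str
    last_name: str


def jit_login(
    assertion_attrs: Dict[str, str], users_by_fed_id: Dict[str, User]
) -> Tuple[User, bool]:
    """Return (user, created) for the subject of an already-validated assertion."""
    fed_id = assertion_attrs["federation_id"]
    existing = users_by_fed_id.get(fed_id)
    if existing is not None:
        return existing, False  # known user: just log in
    # No matching user: create one from the assertion, then log in.
    user = User(
        federation_id=fed_id,
        email=assertion_attrs["email"],
        first_name=assertion_attrs.get("first_name", ""),
        last_name=assertion_attrs["last_name"],
    )
    users_by_fed_id[fed_id] = user
    return user, True
```

Updating an existing user's fields on later logins, and creating the Contact and Account that an Experience Cloud user is associated with, are omitted for brevity.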

Option A is incorrect because Registration Handlers are associated with Authentication Providers (which use OpenID Connect/OAuth), not with SAML SSO. Option C is incorrect because JIT provisioning is a SAML feature, while Authentication Providers use a Registration Handler class to achieve the same result. For a self-service scenario where speed to market and standard protocols are key, SAML SSO with JIT provisioning is the architect's primary choice for automating user management and giving subscribers a seamless single point of entry.

Northern Trail Outfitters is planning to perform nightly batch loads into Salesforce from an external system with a custom Java application using the Bulk API. The CIO is curious about monitoring recommendations for the jobs from the technical architect. Which recommendation should help meet the requirements?

For high-volume data loads using the Bulk API, monitoring should be performed programmatically by the orchestrating client, in this case the custom Java application. The Bulk API is asynchronous: when you submit a job, Salesforce acknowledges the request and processes it in the background.

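The Java application must actively track the state of its own jobs. In Bulk API 1.0, `getBatchInfo` (or `getBatchInfoList` for all batches in a job) returns the current status of each batch, such as `Queued`, `InProgress`, `Completed`, `Failed`, or `Not Processed`. Once a batch is `Completed`, the application retrieves the batch results (for example with `getBatchResultStream` in the Java WSC `BulkConnection` client) to see the success or failure of each individual record. Bulk API 2.0 exposes the equivalent job-level status (`JobComplete`, `Failed`, `Aborted`, and so on) through its job information resource, with separate resources for successful, failed, and unprocessed records.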
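
The polling loop can be sketched as follows. The scenario uses a Java client; this Python sketch keeps the same logic but takes a hypothetical `get_batch_state` callable, standing in for something like `getBatchInfo(jobId, batchId).getState()` in the Java WSC client, so that it runs without a Salesforce connection. The function name and the poll interval and timeout defaults are illustrative.

```python
import time
from typing import Callable, Dict, Iterable

# Bulk API 1.0 batch states that are final (Queued and InProgress are not).
TERMINAL_STATES = {"Completed", "Failed", "Not Processed"}


def wait_for_batches(
    batch_ids: Iterable[str],
    get_batch_state: Callable[[str], str],
    poll_interval: float = 10.0,
    timeout: float = 3600.0,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> Dict[str, str]:
    """Poll until every batch reaches a terminal state or the timeout elapses.

    Returns {batch_id: last_observed_state}; batches still running at the
    timeout keep their non-terminal state so the caller can alert on them.
    """
    pending = set(batch_ids)
    states: Dict[str, str] = {}
    deadline = clock() + timeout
    while pending:
        for batch_id in list(pending):
            state = get_batch_state(batch_id)
            states[batch_id] = state
            if state in TERMINAL_STATES:
                pending.discard(batch_id)
        if not pending or clock() >= deadline:
            break
        sleep(poll_interval)
    return states
```

Reporting batches that are still running at the timeout, rather than waiting indefinitely, gives the nightly job a clear point at which to alert operations.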

Option B is architecturally unsound. Apex triggers still fire during Bulk API loads (records are processed in chunks of 200), so logic that creates a custom record for every error runs inside each batch, adding processing time, consuming data storage, and risking governor limits in a nightly batch load, which works against the purpose of using the Bulk API. Option C is ineffective for Bulk API monitoring because debug logs are a troubleshooting aid, not a monitoring mechanism: they capture only activity for users with an active trace flag, are subject to size and retention limits, and do not report batch or job status.

By recommending Option A, the architect ensures that the Java application maintains full control over the integration lifecycle. The application can log errors locally, implement automated retries for transient failures, and provide the CIO with accurate, high-level reporting on the success rate of the nightly loads without placing unnecessary overhead on the Salesforce platform.
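
The high-level reporting mentioned above comes down to aggregating per-record results. A minimal Python sketch, assuming the Bulk API 1.0 CSV result layout (`Id`, `Success`, `Created`, `Error`) with rows returned in the same order as the submitted records; the function name and summary fields are hypothetical.

```python
import csv
import io
from collections import Counter
from typing import Dict, List


def summarize_batch_result(result_csv: str) -> Dict[str, object]:
    """Summarize one batch result file for the nightly load report.

    Assumes columns Id, Success, Created, Error, with result rows in the
    same order as the submitted rows, so row_number identifies the input record.
    """
    succeeded = 0
    failures: List[Dict[str, str]] = []
    for row_number, row in enumerate(csv.DictReader(io.StringIO(result_csv)), start=1):
        if row["Success"].strip().lower() == "true":
            succeeded += 1
        else:
            failures.append({"row": str(row_number), "error": row["Error"]})
    total = succeeded + len(failures)
    return {
        "total": total,
        "succeeded": succeeded,
        "failed": len(failures),
        "success_rate": succeeded / total if total else 1.0,
        # Most frequent error messages, useful for the CIO-level summary.
        "top_errors": Counter(f["error"] for f in failures).most_common(3),
        "failures": failures,
    }
```

Keeping the failed row numbers lets the Java application (or this sketch's equivalent) resubmit only the failed records on a retry.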

---

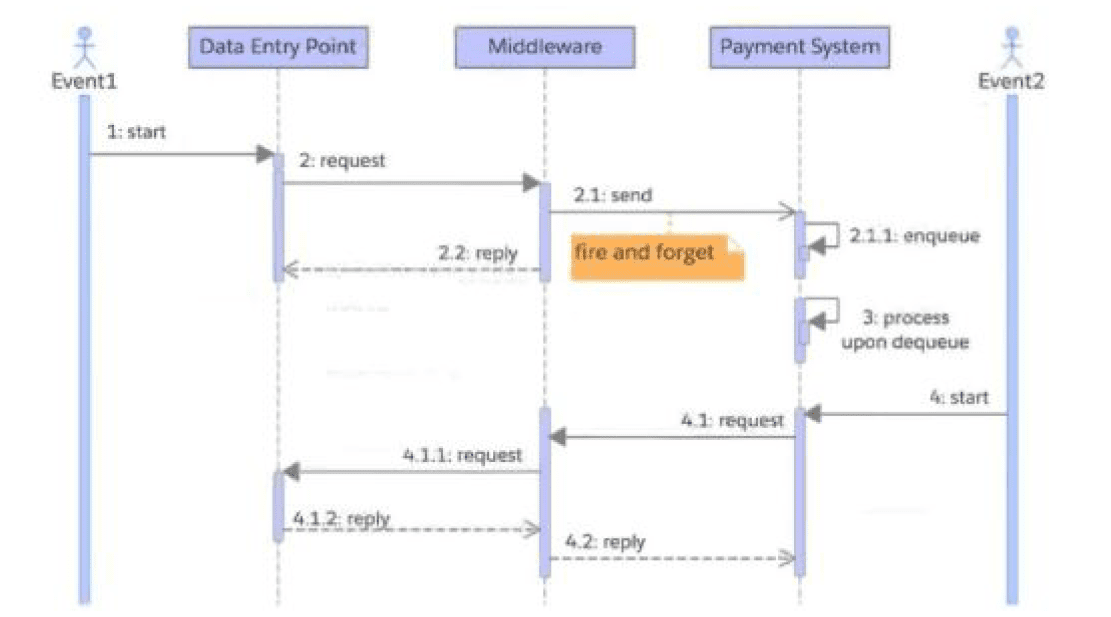

A company accepts payment requests 24/7. Once the company accepts a payment request, its service level agreement (SLA) requires it to make sure each payment request is processed by its Payment System. The company tracks payment requests using a globally unique identifier created at the Data Entry Point. The company's simplified flow is as shown in the diagram.

The company encounters intermittent update errors when two or more processes try to update the same Payment Request record at the same time. Which recommendation should an integration architect make to improve the company's SLA and update conflict handling?

In high-concurrency environments like 24/7 payment processing, a common failure mode is a race condition, where multiple threads attempt to update the same record simultaneously. To resolve this while strictly adhering to the Service Level Agreement (SLA), the Integration Architect should shift responsibility for orchestration to a central 'nervous system': the Middleware (e.g., MuleSoft or an ESB).

According to Salesforce integration best practices, middleware coordination is essential for managing the state and sequencing of asynchronous messages. When the Middleware coordinates request delivery, it can apply sequential, First-In-First-Out (FIFO) queue logic. Even if the Data Entry Point pushes requests at high speed, the Middleware can throttle or serialize the calls to the Payment System, preventing the record-locking errors and update conflicts described in the scenario.
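
The serialization idea can be sketched in Python. Rather than one global FIFO queue, which would also remove the conflicts but make unrelated payments wait on each other, this sketch partitions the queue by payment request ID (a keyed-ordering technique): all updates for one ID go to the same lane, and each lane has a single worker. The class name, lane count, and `apply_update` callback are hypothetical, and in-process threads are used only to illustrate the ordering guarantee.

```python
import queue
import threading
import zlib
from typing import Callable, List


class PartitionedDispatcher:
    """Serialize updates per Payment Request while keeping parallelism across requests.

    Every update for a given payment ID hashes to the same lane, and each lane
    has exactly one worker draining a FIFO queue, so two updates to the same
    record are never applied concurrently and are applied in arrival order.
    """

    def __init__(self, apply_update: Callable[[str, str], None], lanes: int = 4) -> None:
        if lanes < 1:
            raise ValueError("lanes must be at least 1")
        self._apply = apply_update
        self._queues: List[queue.Queue] = [queue.Queue() for _ in range(lanes)]
        self._workers = [
            threading.Thread(target=self._run, args=(q,), daemon=True)
            for q in self._queues
        ]
        for worker in self._workers:
            worker.start()

    def submit(self, payment_id: str, status: str) -> None:
        # crc32 gives a lane assignment that is stable across restarts
        # (the built-in hash() of a str is randomized per process).
        lane = zlib.crc32(payment_id.encode("utf-8")) % len(self._queues)
        self._queues[lane].put((payment_id, status))

    def close(self) -> None:
        """Let every lane finish what is already queued, then stop its worker."""
        for q in self._queues:
            q.put(None)  # sentinel: processed after all earlier updates
        for worker in self._workers:
            worker.join()

    def _run(self, q: queue.Queue) -> None:
        while True:
            item = q.get()
            if item is None:
                break
            payment_id, status = item
            # Error handling omitted: an exception here would stop this lane.
            self._apply(payment_id, status)
```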

Furthermore, the globally unique identifier created at the Data Entry Point allows the Middleware to perform Idempotency checks. If a duplicate request arrives or an error occurs, the Middleware can use this ID to verify the status before attempting another update, ensuring that the 'exactly-once' processing requirement of the SLA is met without creating duplicate payment records or conflicting status updates.
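
An idempotency check keyed on the Data Entry Point's globally unique identifier can be sketched as follows. This minimal Python sketch assumes a hypothetical `process_payment` callable for the Payment System and uses an in-memory dict where a production system would use a durable store such as a database table.

```python
import threading
from typing import Callable, Dict


class IdempotentProcessor:
    """Process each payment request successfully at most once per GUID.

    Duplicate deliveries and retries of an already-processed GUID return the
    recorded outcome instead of calling the Payment System again.
    """

    def __init__(self, process_payment: Callable[[str, dict], str]) -> None:
        self._process = process_payment
        self._outcomes: Dict[str, str] = {}  # stand-in for a durable store
        self._lock = threading.Lock()

    def handle(self, request_guid: str, payload: dict) -> str:
        with self._lock:
            if request_guid in self._outcomes:
                return self._outcomes[request_guid]  # duplicate: no second payment
            outcome = self._process(request_guid, payload)
            # Recorded only after success: if process_payment raises, nothing is
            # stored, so a later retry with the same GUID tries again.
            self._outcomes[request_guid] = outcome
            return outcome
```

Combined with retries (at-least-once delivery), this gives effectively-once processing. One gap remains in the sketch: if the Payment System succeeds but the middleware fails before recording the outcome, a retry would call it again, so the Payment System should ideally reject duplicate GUIDs as well. Holding the lock during the call also serializes all payments, which is acceptable for illustration but not for production throughput.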

While Option B suggests retries, which are necessary for a 'Fire-and-Forget' pattern, retrying without central coordination often exacerbates update conflicts rather than solving them. Option C (processing once) is a result of a well-designed system, but it does not provide the mechanism to handle the specific update conflicts described. By recommending that the Middleware coordinate the entire flow, the architect provides a robust solution that manages delivery, handles retries gracefully, and ensures data integrity across the system landscape.
