Snowflake ARA-R01 Dumps - Pass SnowPro Advanced: Architect Recertification Exam in 2026

The Snowflake ARA-R01 - SnowPro Advanced: Architect Recertification exam is part of the SnowPro Certification track and is designed for experienced Snowflake professionals who want to validate their advanced architecture knowledge. It focuses on the skills needed to design, optimize, secure, and manage Snowflake environments effectively. This recertification exam matters because it helps confirm that your knowledge stays aligned with current Snowflake architecture and best practices. It is especially relevant for architects and technical professionals responsible for real-world Snowflake implementations.

| # | Exam Topics | Sub-Topics | Approximate Weightage (%) |
|---|---|---|---|
| 1 | Data Engineering | Data loading patterns, transformation workflows, pipeline design, data organization | 30% |
| 2 | Performance Optimization | Query tuning, warehouse sizing, caching behavior, performance troubleshooting | 30% |
| 3 | Accounts and Security | Role-based access, account configuration, authentication controls, governance basics | 20% |
| 4 | Snowflake Architecture | Storage and compute separation, virtual warehouses, shared data architecture, workload design | 20% |

This exam tests how well candidates understand Snowflake architecture concepts and how to apply them in practical scenarios. It checks both conceptual depth and the ability to make sound decisions around engineering, performance, and security. Candidates should be ready to analyze situations, choose the best approach, and demonstrate knowledge that reflects real Snowflake work.

How QA4Exam.com Helps You Pass

QA4Exam.com offers Exam PDF material with actual questions and answers, along with an Online Practice Test for the Snowflake ARA-R01 exam. These resources help you study with up-to-date questions and verified answers so you can focus on what matters most. The practice test gives you a real exam simulation, which helps you build confidence and improve time management before test day. By reviewing the exam-style content repeatedly, you can identify weak areas and prepare more effectively for your first attempt. This combination makes it easier to approach the SnowPro Advanced: Architect Recertification exam with a clear strategy.

Frequently Asked Questions

1. Who should take the Snowflake ARA-R01 exam?

This recertification exam is intended for candidates in the SnowPro Certification track who already have advanced Snowflake architecture knowledge and need to validate it again.

2. Is the SnowPro Advanced: Architect Recertification exam difficult?

Yes, it can be challenging because it tests applied knowledge across data engineering, performance optimization, accounts and security, and Snowflake architecture.

3. Can I pass with only braindumps?

Braindumps alone are not the best approach. You should also understand the concepts and use practice tests to improve accuracy and confidence.

4. Do I need hands-on experience to pass ARA-R01?

Hands-on experience is very helpful because the exam focuses on practical Snowflake knowledge and architecture decisions, not just memorization.

5. Are the QA4Exam.com dumps enough, or should I use other resources too?

The QA4Exam.com Exam PDF and Online Practice Test are valuable tools, and combining them with your own review of Snowflake topics can strengthen preparation further.

6. How do the QA4Exam.com practice test and PDF help with first-attempt success?

They help you study actual questions and answers, practice under exam-like conditions, and improve time management so you can perform better on the first attempt.

7. What format do the QA4Exam.com dumps and practice test use?

QA4Exam.com provides an Exam PDF and an Online Practice Test format, giving you flexible ways to review questions, answers, and exam-style practice.

The questions for ARA-R01 were last updated on Jun 3, 2026.


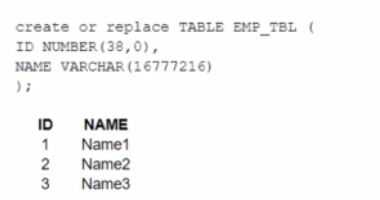

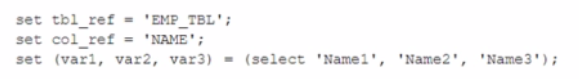

A table, EMP_TBL, has three records as shown:

The following variables are set for the session:

Which SELECT statements will retrieve all three records? (Select TWO).

The correct answers are B and E because they use the correct syntax for the identifier function and reference the session variables correctly.

The identifier function allows you to use a variable or expression as an identifier (such as a table name or column name) in a SQL statement. It takes a single argument and returns it as an identifier. For example, identifier($tbl_ref) returns EMP_TBL as an identifier.

The session variables are set using the SET command and can be referenced using the $ sign. For example, $var1 returns Name1 as a value.

Option A is incorrect because it uses Stbl_ref and Scol_ref, which are not valid session variables or identifiers. They should be $tbl_ref and $col_ref instead.

Option C is incorrect because it uses identifier<Stbl_ref>, which is not a valid syntax for the identifier function. It should be identifier($tbl_ref) instead.

Option D is incorrect because it uses Cvarl, var2, and var3, which are not valid session variables or values. They should be $var1, $var2, and $var3 instead.

Reference:

Snowflake Documentation: Identifier Function

Snowflake Documentation: Session Variables

Snowflake Learning: SnowPro Advanced: Architect Exam Study Guide
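The pattern described in this explanation can be sketched as follows. This is a minimal illustration, not the text of the exam options: the Snowflake statements are held as Python strings and passed to any DB-API style cursor. Only `EMP_TBL` and `Name1` come from the explanation above; the column name `NAME`, the values `Name2` and `Name3`, and the `run_statements` and `RecordingCursor` helpers are assumptions made for the example.

```python
# Snowflake SQL held as plain strings so the sketch runs without a connection.
# Assumed for illustration: column NAME and the values Name2 / Name3.
STATEMENTS = [
    "SET tbl_ref = 'EMP_TBL'",
    "SET col_ref = 'NAME'",
    "SET (var1, var2, var3) = ('Name1', 'Name2', 'Name3')",
    # IDENTIFIER() turns the variable's string value into an object name;
    # a bare $var is used as a literal value.
    "SELECT * FROM IDENTIFIER($tbl_ref) "
    "WHERE IDENTIFIER($col_ref) IN ($var1, $var2, $var3)",
]


def run_statements(cursor, statements=STATEMENTS):
    """Execute each statement in order on a DB-API style cursor."""
    for sql in statements:
        cursor.execute(sql)


class RecordingCursor:
    """Hypothetical stand-in for a real cursor; it only records the SQL."""

    def __init__(self):
        self.executed = []

    def execute(self, sql):
        self.executed.append(sql)


cursor = RecordingCursor()
run_statements(cursor)
print(cursor.executed[-1])
```

With a real Snowflake connection, the same list could be passed to an actual cursor in place of `RecordingCursor`; the `SET` statements must run in the same session as the `SELECT`.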

An Architect is designing a pipeline to stream event data into Snowflake using the Snowflake Kafka connector. The Architect's highest priority is to configure the connector to stream data in the MOST cost-effective manner.

Which of the following is recommended for optimizing the cost associated with the Snowflake Kafka connector?

What Snowflake system functions are used to view and/or monitor the clustering metadata for a table? (Select TWO).

The Snowflake system functions used to view and monitor the clustering metadata for a table are:

SYSTEM$CLUSTERING_INFORMATION

SYSTEM$CLUSTERING_DEPTH

Explanation:

The SYSTEM$CLUSTERING_INFORMATION function returns clustering information for a specified table. This information includes the total number of micro-partitions, the number of constant micro-partitions, the average overlaps, the average depth, and a partition depth histogram. You can optionally specify one or more columns for which the clustering information is calculated, and the result is returned as a JSON string.

The SYSTEM$CLUSTERING_DEPTH function computes the average depth of a table based on specified columns or the clustering key defined for the table. A lower average depth indicates that the table is better clustered with respect to the specified columns. This function also allows specifying columns to calculate the depth, and the values need to be enclosed in single quotes.

SYSTEM$CLUSTERING_INFORMATION: Snowflake Documentation

SYSTEM$CLUSTERING_DEPTH: Snowflake Documentation
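As a rough sketch of how these two functions might be used together, the snippet below builds the SQL calls and reads the JSON that SYSTEM$CLUSTERING_INFORMATION returns. The table name `orders`, the column `order_date`, and every number in `SAMPLE_OUTPUT` are hypothetical; the helper names `clustering_sql` and `summarize` are also assumptions, not part of any Snowflake client library.

```python
import json

# Hypothetical sample shaped like SYSTEM$CLUSTERING_INFORMATION output.
SAMPLE_OUTPUT = """
{
  "cluster_by_keys": "LINEAR(order_date)",
  "total_partition_count": 1000,
  "total_constant_partition_count": 250,
  "average_overlaps": 3.2,
  "average_depth": 2.1,
  "partition_depth_histogram": {"00000": 0, "00001": 250, "00002": 400, "00003": 350}
}
"""


def clustering_sql(function, table, columns=None):
    """Build a call to a clustering system function.

    Columns, when given, are passed as one single-quoted, parenthesized list.
    """
    if columns:
        cols = ", ".join(columns)
        return f"SELECT {function}('{table}', '({cols})')"
    return f"SELECT {function}('{table}')"


def summarize(raw_json):
    """Extract the average depth and the share of constant micro-partitions."""
    info = json.loads(raw_json)
    total = info["total_partition_count"]
    constant = info["total_constant_partition_count"]
    return {
        "average_depth": info["average_depth"],
        "constant_fraction": constant / total if total else 0.0,
    }


info_sql = clustering_sql("SYSTEM$CLUSTERING_INFORMATION", "orders", ["order_date"])
depth_sql = clustering_sql("SYSTEM$CLUSTERING_DEPTH", "orders")
summary = summarize(SAMPLE_OUTPUT)
print(info_sql)
print(depth_sql)
print(summary)
```

A lower `average_depth` suggests better clustering on the chosen columns, so tracking that value over time is one simple way to monitor a table.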

An Architect needs to design a data unloading strategy for Snowflake that will be used with the COPY INTO <location> command.

Which configuration is valid?

The valid configuration among the provided choices is C. Snowflake supports unloading data into Google Cloud Storage using the COPY INTO <location> command, and the settings listed in option C (Parquet file format, UTF-8 file encoding, and gzip compression) are supported by Snowflake. Parquet is a columnar storage file format that is well suited to analytical processing. UTF-8 encoding and gzip compression are both standard, widely used settings that are compatible with Snowflake's data unloading to cloud storage platforms.

Reference:

Snowflake Documentation on COPY INTO command

Snowflake Documentation on Supported File Formats

Snowflake Documentation on Compression and Encoding Options

A company needs to have the following features available in its Snowflake account:

1. Support for Multi-Factor Authentication (MFA)

2. A minimum of 2 months of Time Travel availability

3. Database replication between different regions

4. Native support for JDBC and ODBC

5. Customer-managed encryption keys using Tri-Secret Secure

6. Support for Payment Card Industry Data Security Standards (PCI DSS)

In order to provide all the listed services, what is the MINIMUM Snowflake edition that should be selected during account creation?

Support for Multi-Factor Authentication (MFA): This is a standard feature available in all Snowflake editions.

Native support for JDBC and ODBC: This is a standard feature available in all Snowflake editions.

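Database replication between regions is also available in all editions. Time Travel of up to 90 days, which covers the 2-month requirement, is available from the Enterprise edition upward. Customer-managed encryption keys using Tri-Secret Secure and support for PCI DSS require the Business Critical edition.

Therefore, the minimum Snowflake edition that should be selected during account creation to provide all the listed services is the Business Critical edition.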
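The reasoning for this kind of question can be sketched as a lookup: find the lowest edition that offers each required feature, then take the highest of those. The mapping below is an assumption based on a reading of Snowflake's edition comparison and should be checked against the current documentation; the feature keys are hypothetical labels chosen for the example.

```python
# Editions in ascending order of capability.
EDITIONS = ["Standard", "Enterprise", "Business Critical", "Virtual Private Snowflake"]

# Assumed lowest edition offering each feature (verify against current docs).
MIN_EDITION = {
    "mfa": "Standard",
    "jdbc_odbc": "Standard",
    "database_replication": "Standard",
    "time_travel_over_1_day": "Enterprise",
    "tri_secret_secure": "Business Critical",
    "pci_dss": "Business Critical",
}


def minimum_edition(features):
    """Return the lowest edition that covers every requested feature."""
    return max((MIN_EDITION[f] for f in features), key=EDITIONS.index)


required = [
    "mfa",
    "time_travel_over_1_day",
    "database_replication",
    "jdbc_odbc",
    "tri_secret_secure",
    "pci_dss",
]
print(minimum_edition(required))
```

The single most demanding requirement decides the answer, which is why Tri-Secret Secure or PCI DSS alone is enough to rule out the Standard and Enterprise editions here.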
