Most Recent VMware 3V0-24.25 Exam Dumps

Prepare for the VMware Advanced VMware Cloud Foundation 9.0 vSphere Kubernetes Service exam with our extensive collection of questions and answers. These practice Q&A are updated according to the latest syllabus, providing you with the tools needed to review and test your knowledge.

QA4Exam focuses on the latest syllabus and exam objectives, and our practice Q&A are designed to help you identify key topics and solidify your understanding. By focusing on the core curriculum, these questions and answers help you cover all the essential topics, so you are well prepared for every section of the exam. Each question comes with a detailed explanation that offers useful insight and helps you learn from your mistakes. Whether you want to assess your progress or dive deeper into complex topics, our updated Q&A provide the support you need to approach the VMware 3V0-24.25 exam with confidence and succeed.

The questions for 3V0-24.25 were last updated on May 1, 2026.

An administrator is modernizing the internal HR and payroll applications using vSphere Kubernetes Service (VKS). The applications are composed of multiple microservices deployed across Kubernetes clusters, fronted by Ingress controllers that route user traffic through the Avi Kubernetes Operator. During testing, it is discovered that manually creating and renewing TLS certificates for each Ingress resource is error-prone and leads to periodic outages when certificates expire. The requirements also mandate that all application endpoints use trusted certificates issued through the corporate certificate authority (CA) with automatic renewal and rotation.

Which requirement can be met by using cert-manager?

cert-manager addresses the operational risk described (manual creation and renewal causing outages) by turning certificate lifecycle management into a native, declarative Kubernetes workflow. Instead of treating TLS certificates as manually managed files, cert-manager extends the Kubernetes API with custom resources such as Certificate, Issuer, and ClusterIssuer, so certificates and their issuing policies become first-class objects that can be version-controlled and automatically reconciled. This directly satisfies the requirement to use trusted certificates issued through the corporate CA, because an Issuer or ClusterIssuer can represent the corporate CA integration and define how certificate requests are fulfilled. Once configured, cert-manager continuously monitors certificate validity, automatically renews and rotates certificates before they expire, and updates the referenced Kubernetes Secrets so that Ingress endpoints remain protected without human intervention. In a vSphere Supervisor / VKS environment, VMware also runs cert-manager on the Supervisor to rotate certificates automatically in platform integrations (for example, certificates used by monitoring components), reinforcing the model of automated rotation over manual certificate handling.
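
To make the declarative model concrete, here is a minimal Python sketch that generates a ClusterIssuer and a Certificate as JSON. It assumes the corporate CA is wired in through cert-manager's `ca` issuer type, meaning the CA certificate and key sit in a Secret (hypothetically named `corporate-ca-key-pair`) in the cert-manager cluster resource namespace; if the corporate CA is reached through another integration, a different issuer spec applies. All names, the namespace, the host, and the lifetimes are illustrative placeholders.

```python
import json

# Hypothetical names used only for illustration; replace them with your own.
ISSUER_NAME = "corporate-ca"         # hypothetical ClusterIssuer name
CA_SECRET = "corporate-ca-key-pair"  # hypothetical Secret holding the corporate CA cert and key


def cluster_issuer(name: str, ca_secret: str) -> dict:
    """ClusterIssuer that signs certificates with a CA key pair stored in a Secret."""
    return {
        "apiVersion": "cert-manager.io/v1",
        "kind": "ClusterIssuer",
        "metadata": {"name": name},
        "spec": {"ca": {"secretName": ca_secret}},
    }


def certificate(name: str, namespace: str, hosts: list[str], issuer: str) -> dict:
    """Certificate that cert-manager keeps valid and writes into the Secret '<name>-tls'."""
    return {
        "apiVersion": "cert-manager.io/v1",
        "kind": "Certificate",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "secretName": f"{name}-tls",  # the Ingress references this Secret in spec.tls
            "dnsNames": list(hosts),
            "duration": "2160h",          # 90-day certificate lifetime
            "renewBefore": "360h",        # start renewal 15 days before expiry
            "issuerRef": {"name": issuer, "kind": "ClusterIssuer", "group": "cert-manager.io"},
        },
    }


if __name__ == "__main__":
    items = [
        cluster_issuer(ISSUER_NAME, CA_SECRET),
        certificate("payroll", "hr-apps", ["payroll.example.com"], ISSUER_NAME),
    ]
    # kubectl accepts a v1 List, so the output can be piped to `kubectl apply -f -`.
    print(json.dumps({"apiVersion": "v1", "kind": "List", "items": items}, indent=2))
```

Because renewal is driven by `renewBefore`, no one has to track expiry dates by hand; cert-manager reissues the certificate and rewrites the Secret the Ingress already points at.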

After upgrading the vSphere Supervisor, an administrator notices that the vSphere Kubernetes Service, configured as a Core Supervisor Service, is stuck in a "Configuring" state.

What should the administrator do to finish the upgrade?

A Supervisor upgrade affects the lifecycle and compatibility of Supervisor Services, including Core Supervisor Services. VMware guidance emphasizes validating the compatibility of components that depend on the Supervisor and remediating any incompatibilities as part of the upgrade. If a Core Supervisor Service remains stuck in "Configuring" after the Supervisor is upgraded, a common cause is that the service version is not compatible with the upgraded Supervisor and vCenter software. The upgrade workflow therefore expects you to identify incompatible Supervisor Services and update them to versions supported at the new software level. Running the compatibility checks and then updating the vSphere Kubernetes Service to a version supported by the upgraded Supervisor lets the service complete reconciliation and reach a healthy state, which finishes the upgrade.
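
The "identify, then update" logic of a compatibility check can be sketched as a plain version comparison. This is a hypothetical illustration only: the service names and version numbers are placeholders, not VMware's actual interoperability data, and in practice the check is performed by the vCenter/Supervisor upgrade workflow rather than by a script like this.

```python
# Placeholder data: NOT VMware's real compatibility matrix.
INSTALLED = {"vsphere-kubernetes-service": "3.1.0", "harbor": "2.9.1"}
MIN_SUPPORTED = {"vsphere-kubernetes-service": "3.3.0", "harbor": "2.9.1"}


def parse_version(version: str) -> tuple[int, ...]:
    """Convert a plain dotted version such as '3.1.0' into (3, 1, 0) for numeric comparison."""
    return tuple(int(part) for part in version.split("."))


def incompatible_services(installed: dict[str, str], minimum: dict[str, str]) -> list[str]:
    """Return services installed below the minimum version the upgraded Supervisor supports."""
    return sorted(
        name
        for name, version in installed.items()
        if name in minimum and parse_version(version) < parse_version(minimum[name])
    )


if __name__ == "__main__":
    for name in incompatible_services(INSTALLED, MIN_SUPPORTED):
        print(f"update required: {name} {INSTALLED[name]} -> >= {MIN_SUPPORTED[name]}")
```

Comparing versions as integer tuples rather than strings avoids the classic mistake of treating "3.10.0" as older than "3.9.0".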

An administrator is building a secure, multi-tenant container registry strategy for their vSphere Kubernetes Services deployment running on VMware Cloud Foundation. Each workload domain hosts a Supervisor Cluster, and multiple development teams require private repositories to store and distribute container images for Kubernetes clusters. The organization enforces strict image security posture due to compliance requirements. The operations team deploys Harbor as an add-on service through the Supervisor control plane, and developers push/pull images from Harbor through Kubernetes manifests.

What requirement describes the role and purpose of Harbor?

Harbor is used as a private registry service to store and distribute container artifacts for Kubernetes consumption, which is exactly what a multi-tenant platform needs when multiple teams require isolated repositories. The VMware documentation treats Harbor as a VMware Tanzu Harbor Registry service, including governance over who can operate it and how teams are separated into projects (a key multi-tenancy boundary). For example, vSphere privileges explicitly cover the ability to create or delete a Harbor registry and to create, delete, or purge Harbor registry projects, reinforcing that Harbor is operated as a managed registry with project-scoped administration and access control.

In practice, in regulated environments the registry's role is not just storage: Harbor is commonly used to enforce enterprise controls such as policy-driven access (RBAC), and it supports security capabilities such as image vulnerability scanning and image trust/signing, which directly address the requirement to prevent unsafe images from being promoted or deployed.
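
As an illustration of how a team consumes its Harbor project from a manifest, the Python sketch below builds a Pod whose image points at a project-scoped repository and pulls it with a registry credential Secret. The registry host `harbor.example.com`, the project `payroll-team`, the image name, and the Secret name `harbor-pull-secret` are hypothetical; such a Secret would typically be created with `kubectl create secret docker-registry`.

```python
import json


def harbor_image(registry: str, project: str, repository: str, tag: str) -> str:
    """Harbor image references take the form <registry>/<project>/<repository>:<tag>."""
    return f"{registry}/{project}/{repository}:{tag}"


def pod_from_harbor(name: str, namespace: str, image: str, pull_secret: str) -> dict:
    """Pod that pulls its image from a private Harbor project using a docker-registry Secret."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "containers": [{"name": name, "image": image}],
            "imagePullSecrets": [{"name": pull_secret}],  # credentials for the private project
        },
    }


if __name__ == "__main__":
    image = harbor_image("harbor.example.com", "payroll-team", "payroll-api", "1.0.0")
    print(json.dumps(pod_from_harbor("payroll-api", "hr-apps", image, "harbor-pull-secret"), indent=2))
```

Because the project name is part of every image reference, Harbor's project-level RBAC and scanning policies apply to exactly the images a given team pushes and pulls.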

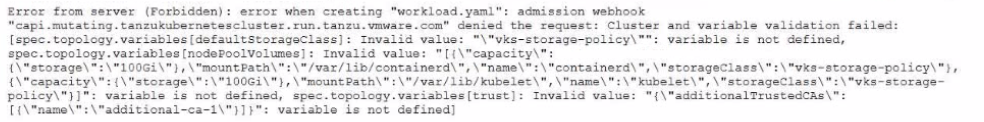

An administrator is upgrading to VKS 3.4 and encounters the following error during cluster creation using workload.yaml:

How should the administrator resolve this issue to successfully complete the upgrade?

The error shows an admission webhook denial in which variable validation failed and multiple entries under spec.topology.variables[...] are reported as "variable is not defined". That message indicates the manifest supplies variables that are not part of the current Cluster API topology schema enforced by the Supervisor during cluster creation. In VKS, cluster provisioning is declarative: you invoke the VKS API with kubectl and a YAML file, and "after the cluster is created, you update the YAML to update the cluster." When the API or schema changes between releases, older manifests can contain fields or variables that are no longer recognized, and the admission webhook rejects them to prevent an invalid cluster spec from being created.

This aligns with VMware's broader direction: the older TanzuKubernetesCluster (TKC) API was deprecated, and customers are encouraged to use Cluster API for bootstrap, configuration, and lifecycle management. In practice, to complete the upgrade and cluster creation successfully, you must update the cluster manifest to match the supported schema: remove the deprecated or unknown topology variables shown in the error (for example, the undefined storage-policy and trust variables) and re-apply the corrected workload.yaml.
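
The remediation amounts to keeping only the topology variables that the ClusterClass in use actually defines (its `spec.variables`, visible with `kubectl get clusterclass <name> -n <namespace> -o yaml`). The Python sketch below does that filtering on a manifest already loaded as a dictionary. The class and variable names are hypothetical stand-ins for those in the error, and dropping a variable is only appropriate when its setting is no longer needed; a setting that still matters should be carried over to whatever the current schema defines for it.

```python
import copy


def drop_undefined_variables(cluster: dict, defined: set[str]) -> tuple[dict, list[str]]:
    """Return a copy of a Cluster manifest without topology variables missing from `defined`,
    together with the names that were removed. The input dict is left unchanged."""
    fixed = copy.deepcopy(cluster)
    topology = fixed["spec"]["topology"]
    if "variables" not in topology:
        return fixed, []
    variables = topology["variables"]
    topology["variables"] = [v for v in variables if v["name"] in defined]
    removed = [v["name"] for v in variables if v["name"] not in defined]
    return fixed, removed


if __name__ == "__main__":
    # Hypothetical manifest fragment; class and variable names are placeholders.
    cluster = {
        "apiVersion": "cluster.x-k8s.io/v1beta1",
        "kind": "Cluster",
        "metadata": {"name": "workload", "namespace": "dev"},
        "spec": {
            "topology": {
                "class": "example-clusterclass",
                "variables": [
                    {"name": "vmClass", "value": "best-effort-small"},
                    {"name": "legacyStoragePolicy", "value": "gold"},
                    {"name": "legacyTrust", "value": {"additionalTrustedCAs": []}},
                ],
            }
        },
    }
    # Names defined by the ClusterClass in use (placeholder set).
    fixed, removed = drop_undefined_variables(cluster, {"vmClass", "storageClass"})
    print("removed:", removed)
```

Returning the removed names alongside the fixed manifest makes it easy to review exactly what changed before re-applying the file.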

How should an administrator enable autoscaling for a vSphere Kubernetes Service (VKS) cluster?

In VCF 9.0, cluster autoscaling is delivered as an optional capability that requires installing the Cluster Autoscaler as a standard package. The VCF 9.0 materials explicitly list Cluster Autoscaler as an optionally installed package for vSphere Kubernetes Service, alongside other optional packages (for example, Harbor, Velero, and Istio). The release information further emphasizes that autoscaling features (including newer behaviors such as scaling from and to zero for supported VKr versions) require that "the autoscaler standard package" be installed.

Operationally, installing the autoscaler package provides the controller that watches pending pods and node utilization signals and then drives the required changes in desired worker capacity. After that controller is present, you typically express scaling intent through the cluster's declarative configuration (for example, worker pool/node pool constraints and limits) so the autoscaler can act within the boundaries you define. Without the autoscaler package, changing replica counts or expecting automatic node growth/shrink will not produce autoscaling behavior because the control loop that performs those actions is missing.
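
Once the package is installed, the Cluster API autoscaler integration reads a node pool's bounds from annotations on its machine deployment. The Python sketch below builds one `spec.topology.workers.machineDeployments` entry carrying the Cluster API autoscaler min/max size annotations and deliberately omits `replicas`, so the cluster topology does not compete with the autoscaler over the replica count. The pool name `np-1` and class `node-pool` are placeholders, and whether a minimum of 0 is honored depends on the VKr version, as described above.

```python
import json

MIN_SIZE_ANNOTATION = "cluster.x-k8s.io/cluster-api-autoscaler-node-group-min-size"
MAX_SIZE_ANNOTATION = "cluster.x-k8s.io/cluster-api-autoscaler-node-group-max-size"


def autoscaled_pool(name: str, pool_class: str, min_size: int, max_size: int) -> dict:
    """Build a machineDeployments entry whose size is bounded by autoscaler annotations."""
    if not 0 <= min_size <= max_size:
        raise ValueError("expected 0 <= min_size <= max_size")
    return {
        "class": pool_class,
        "name": name,
        # No "replicas" key: the autoscaler, not the manifest, owns the replica count.
        "metadata": {
            "annotations": {
                # Kubernetes annotation values must be strings.
                MIN_SIZE_ANNOTATION: str(min_size),
                MAX_SIZE_ANNOTATION: str(max_size),
            }
        },
    }


if __name__ == "__main__":
    print(json.dumps(autoscaled_pool("np-1", "node-pool", 1, 5), indent=2))
```

Validating the bounds before emitting the manifest catches an inverted min/max pair locally instead of leaving the autoscaler with an unusable node group.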
